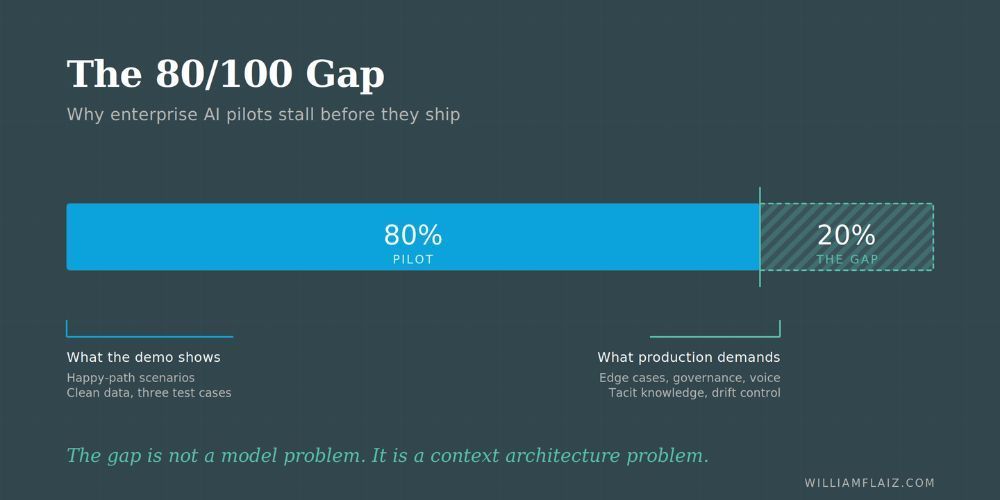

Why Your AI Pilot Stalled at 80 Percent

The context architecture problem nobody warned you about, and a framework to fix it.

You know the meeting.

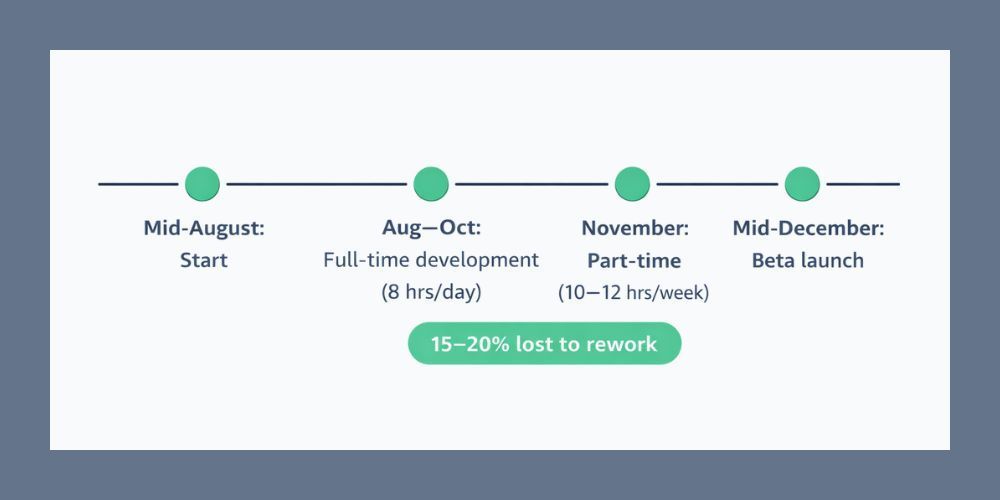

The vendor demo was sharp. The pilot hit its milestones. The executive sponsor told the board this was the quarter AI moved from experiment to production. Then something went quiet. The pilot didn't fail, exactly. It just stopped progressing. Every week brought a new edge case, a new governance question, a new workflow that almost worked but needed one more human pass before it could ship.

You are not alone, and you did not make a bad vendor choice.

Gartner's April survey of 782 infrastructure and operations leaders found that only 28 percent of enterprise AI use cases fully meet their ROI expectations. Twenty percent fail outright. The top reasons cited were poor data quality and persistent skill gaps. Neither of those is a model problem. Both are symptoms of something the market has only recently started naming.

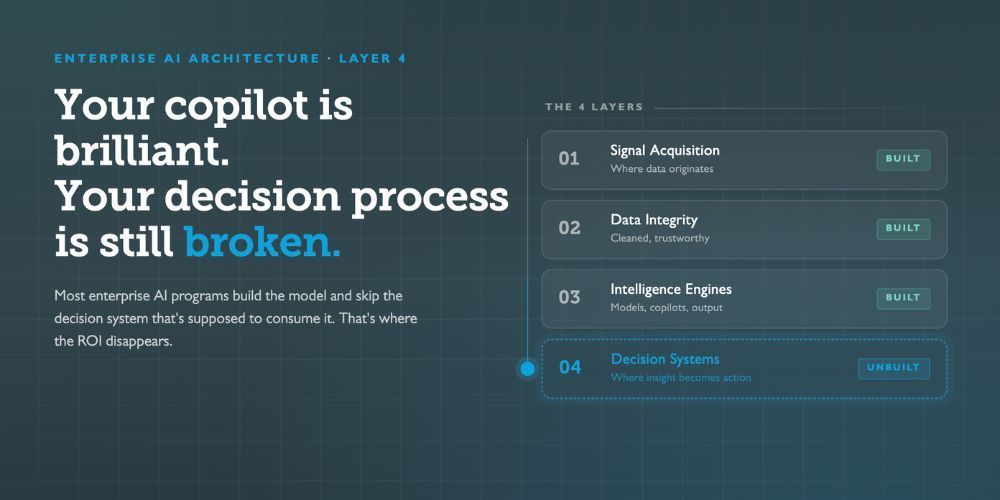

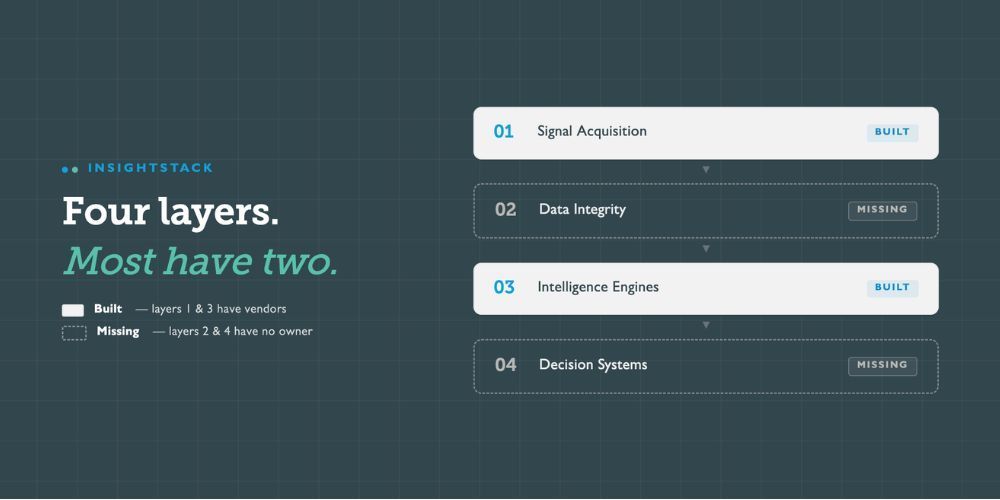

There is a layer missing between your AI vendor and your production workflow. Most organizations do not have a name for it yet. They do not have an owner for it. They are not funding it. And it is the layer that determines whether your pilot closes the last 20 percent or spends the next eighteen months grinding against it.

That layer is context architecture.

What changed in the last twelve months

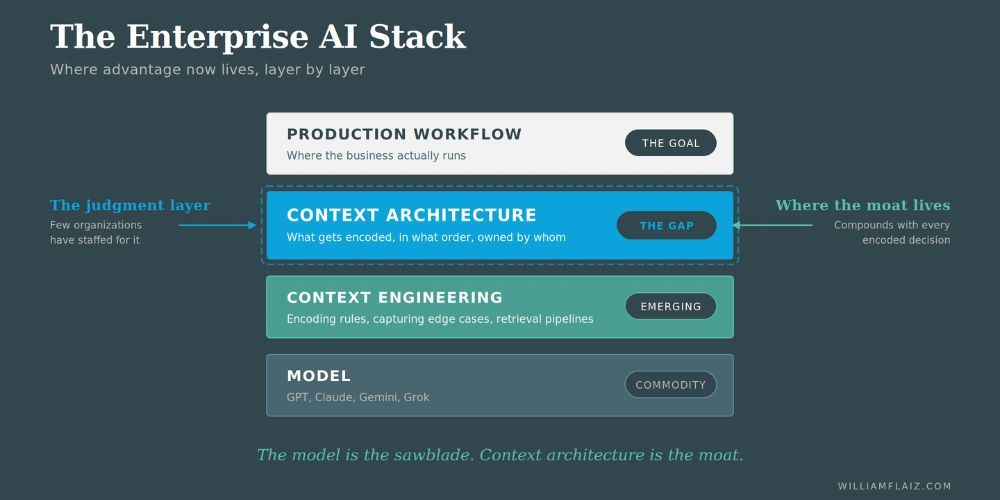

Two years ago, the enterprise AI question was which model to pick. Today, the frontier models from OpenAI, Anthropic, Google, and xAI all perform at or near expert level on a wide range of professional tasks, and the lead any one of them holds over the others is now measured in months, not years. The model is no longer where advantage lives.

Look at where the money is actually going. Anthropic's run rate crossed 30 billion dollars this month, and more than 1,000 enterprise customers now spend over 1 million dollars a year on Claude. None of them signed a million-dollar contract for a chatbot. They signed for Claude wired into their workflows, their data, their governance rules, and their accumulated institutional knowledge.

EY just rolled out agentic AI to 130,000 auditors across 150 countries. The system processes more than 1.4 trillion lines of journal entry data per year. The agents are useful not because EY picked the right model. They are useful because they sit on a decade of encoded EY-specific audit methodology. Any competitor with the same model access has zero chance of replicating that inside a year.

The operational work around the model is now the moat.

This creates a new category of decision that your organization probably has not staffed for. Someone needs to decide which workflows get encoded first, how tacit knowledge gets pulled out of the people who have it, where governance rules live in the stack, how edge cases feed back into the system, and who owns the work when it spans IT, operations, legal, and the business.

That set of decisions is context architecture. The people who make those decisions well are the difference between AI programs that ship and AI programs that stall.

Context architecture is not context engineering

The two terms sound similar and they get confused constantly. The distinction matters.

Context engineering is the hands-on work of encoding rules, capturing edge cases, and curating what information an agent sees at the moment of a decision. It is a technical discipline. It is tedious, editorial, and essential. The people doing this work are the DevOps engineers of the AI era, and LinkedIn data shows roles with titles like Context Engineer and AI Operations Lead growing at triple-digit rates.

Context architecture sits above that work. It is the function that decides what gets encoded, in what order, to what resolution, and how the pieces connect. It is the judgment layer. If context engineering is the craft of writing the rules, context architecture is the discipline of knowing which rules matter, which workflows to touch first, and how to sequence the program so the organization sees results in ninety days instead of eighteen months.

Most enterprises will eventually need both. Today most have neither, and the gap at the architecture layer is wider than the gap at the engineering layer, because the architecture role is new and the people who can do it well are rare.

A good context architect has to do three things that rarely show up in the same person. They have to be able to surface the tacit knowledge in an organization quickly, working alongside the long-tenured operators who actually hold it, with a methodology that does not depend on those operators also being the ones who document it. They have to understand AI systems well enough to know what is encodable now, what is encodable later, and what has to stay with humans. And they have to have the sequencing judgment to decide which domains get touched first and which exceptions matter enough to encode before the program can produce results.

This is why most organizations are better served by an architect who has done this work across multiple environments than by an insider trying to do it alongside their day job. The insider knows the content. The architect knows the extraction and the sequencing. The program works when the two operate together.

Without that architect, the context engineering work still happens, but it happens in a dozen pockets of the organization at once, with no coherence, no priority, and no integration. You end up with encoded rules that contradict each other, governance that sits outside the system instead of inside it, and agents that perform well in the domain where the loudest team encoded rules first and poorly everywhere else.

The diagnostic

The questions below are designed to surface whether your organization has a context architecture function, whether it is positioned correctly, and whether the people doing the work have the conditions they need to succeed. Answer honestly. A no is not a failure. It is a signal about what to fix next.

1. Is there a single named owner for context architecture across your AI initiatives, reporting at the right level?

If you cannot name one person with this responsibility, or if the responsibility is split across three people who all think one of the others is doing it, you do not have context architecture. You have a committee. A yes here means the owner has real authority over sequencing decisions, has budget to hire context engineers underneath them, and reports close enough to the CDO or CIO to move fast when a business unit needs to be told their domain is not first in line.

2. Has your organization identified which workflows generate the most value from encoded tacit knowledge, and sequenced the encoding work accordingly?

Most AI programs pick their pilots based on which business unit is loudest, which vendor is most available, or which use case is most visible. A mature program picks based on where encoded tacit knowledge produces the highest leverage. If your pilot list was assembled by demand rather than by leverage, you are optimizing for the wrong signal. A yes here means you have a written ranking, the ranking is tied to measurable business outcomes, and the teams working on sequenced priorities know why they were chosen.

3. Do the people holding the tacit knowledge in your organization have a defined role in the encoding process, and is their time protected for it?

The person who knows the twelve exceptions nobody wrote down is usually your most valuable operator. They are also the person with the least free time. If the context encoding work is happening without them, or is happening in late-night catch-up sessions around their real job, you will end up with encoded rules that look right on paper and fail in production. A yes here means the tacit knowledge holders have named hours on their calendar for this work, their managers know the work is strategic, and the encoding sessions are structured enough that the holder can contribute without preparing a deck.

4. When your agents need to reason over authoritative information, does the retrieval layer pull from a canonical source of truth, or does it pull from whatever documents were available when the pilot was built?

The pilot usually works because someone loaded the right PDFs into the right folder. Production fails because those PDFs go stale, get duplicated, get updated by a different team, and nobody has decided which version is the one the agent should trust. A yes here means you have a named canonical source for each domain the agent reasons over, a process for updating that source, and a retrieval layer that points at it rather than at whatever was convenient.

5. Are your governance rules encoded inside the system the agent reasons over, or are they applied as a review pass after the agent produces output?

If your governance is a human review step, your agent is not governed. It is supervised. Supervision does not scale. A yes here means the compliance rules, brand rules, and legal constraints are encoded as part of the context the agent operates inside, with human review reserved for the genuinely novel cases rather than for catching the ones the system should have handled on its own.
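For readers who want to see what questions 4 and 5 look like in a system, here is a minimal Python sketch. It assumes a hypothetical setup in which each domain maps to exactly one named canonical source, and governance rules are simple predicates that travel inside the agent's context rather than being checked in a review pass afterward. Every name here (`CANONICAL_SOURCES`, `GovernanceRule`, `build_context`, the example domains, URIs, and the 500 USD refund cap) is illustrative, not a real product or API, and real governance rules are rarely this easy to express as a single check.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical registry: exactly one named canonical source per domain.
# The retrieval layer resolves a domain here instead of reading whatever folder the pilot used.
CANONICAL_SOURCES: dict[str, str] = {
    "refund_policy": "policy-db://finance/refunds/current",
    "brand_voice": "cms://brand/guidelines/current",
}


@dataclass
class GovernanceRule:
    """A compliance, brand, or legal constraint carried inside the agent's context."""
    name: str
    applies_to: str                    # domain the rule governs
    instruction: str                   # text the agent reasons over
    check: Callable[[dict], bool]      # True when a request satisfies the rule


@dataclass
class AgentContext:
    domain: str
    source: Optional[str]              # canonical source the agent must retrieve from
    instructions: list[str] = field(default_factory=list)
    violations: list[str] = field(default_factory=list)
    needs_human_review: bool = False


def build_context(domain: str, request: dict, rules: list[GovernanceRule]) -> AgentContext:
    source = CANONICAL_SOURCES.get(domain)
    if source is None:
        # Nothing is encoded for this domain yet: a genuinely novel case goes to a human.
        return AgentContext(domain, source=None, needs_human_review=True)

    ctx = AgentContext(domain, source)
    for rule in rules:
        if rule.applies_to != domain:
            continue
        # The rule is part of what the agent sees, not a review pass after it answers.
        ctx.instructions.append(rule.instruction)
        # Violations are recorded so the system can refuse or correct the request itself
        # instead of escalating a case it already knows how to handle.
        if not rule.check(request):
            ctx.violations.append(rule.name)
    return ctx


if __name__ == "__main__":
    rules = [
        GovernanceRule(
            name="refund_cap",
            applies_to="refund_policy",
            instruction="Refunds above 500 USD require a manager approval code.",
            check=lambda r: r.get("amount", 0) <= 500 or "manager_code" in r,
        )
    ]
    print(build_context("refund_policy", {"amount": 800}, rules))
    print(build_context("vendor_contracts", {}, rules))
```

The design choice the sketch is meant to show: human review is triggered only when a domain has no encoded context at all, while a known rule violation is caught by the system before any output exists.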

How to read your answers

Count your yes answers across all five questions.

Four or five yes. You have a functioning context architecture. The work now is to deepen it, extend it to additional domains, and build the feedback loops that let it compound. You are already ahead of the market.

Two or three yes. You have pieces of the function, but the gaps are likely blocking your pilots from reaching production. The highest-leverage move is usually to close the organizational gaps first: ownership, sequencing, and protected time for the people who hold the tacit knowledge. Technical gaps rarely close while organizational gaps remain open.

Zero or one yes. You do not have context architecture yet. This is the most common result, and it is not a crisis. It is a scoping conversation. The first step is naming the function, deciding where it reports, and identifying the two or three workflows where getting it right in the next ninety days would change the trajectory of your program.
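If you run the diagnostic across several business units and want to compare results consistently, the scoring bands above reduce to a few lines of Python. This is a minimal sketch: the question labels are shorthand paraphrases of the five questions, and `read_diagnostic` and `open_gaps` are hypothetical helper names, not part of any tool.

```python
# Shorthand labels for the five diagnostic questions, in order.
QUESTIONS = (
    "Single named owner for context architecture",
    "Encoding work sequenced by leverage",
    "Protected time for tacit knowledge holders",
    "Retrieval points at canonical sources",
    "Governance encoded inside the agent's context",
)


def read_diagnostic(answers: list[bool]) -> str:
    """Map five yes/no answers to the reading bands described above."""
    if len(answers) != len(QUESTIONS):
        raise ValueError(f"expected {len(QUESTIONS)} answers, got {len(answers)}")
    yes = sum(answers)  # True counts as 1, False as 0
    if yes >= 4:
        return "functioning: deepen, extend, build feedback loops"
    if yes >= 2:
        return "partial: close organizational gaps first"
    return "absent: name the function, set its reporting line, pick two or three workflows"


def open_gaps(answers: list[bool]) -> list[str]:
    """Every 'no' is a signal about what to fix next; list them in question order."""
    return [q for q, a in zip(QUESTIONS, answers) if not a]


if __name__ == "__main__":
    unit = [True, False, True, False, False]
    print(read_diagnostic(unit))
    print(open_gaps(unit))
```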

What happens in organizations that take this seriously

The organizations closing the last 20 percent right now share a pattern. They stopped treating AI as a procurement decision and started treating it as an operational discipline. They named the context architecture function, they put it at a level where it could make sequencing calls without waiting for permission, and they accepted that the encoding work is slow, editorial, and worth the time.

The gap between those organizations and the rest is widening every quarter. The companies that picked the right context architecture in 2025 and 2026 will spend the next decade compounding advantage on top of it. The companies still debating which model to standardize on will spend the same decade buying pilots that never ship.

You know which side of that line your organization is on. The diagnostic above tells you where to start.

What is context architecture, in plain terms?

Context architecture is the function inside an organization that decides what institutional knowledge gets encoded for AI agents, in what order, to what level of detail, and how the pieces connect across governance, data, and workflows. It is the judgment layer above the hands-on work of context engineering.

How is this different from having a good AI strategy?

AI strategy answers the question of where AI should apply in the business. Context architecture answers the question of how to actually make it work once the strategy has picked the targets. Most organizations have the first. When a program stalls at 80 percent, the missing piece is almost always the second.

Who should own context architecture in an organization?

The function should have a single named owner, reporting close enough to the CDO or CIO to move on sequencing decisions without building consensus across business units. Organizations are usually better served by an architect who has done this work across multiple environments than by an insider trying to layer it on top of a day job. The insider knows the content. The architect knows the extraction and the sequencing. The program works when the two operate together.

Author: William Flaiz