Why Enterprise AI Pilots Stall Before Production

The demo was flawless.

The model performed exactly as promised. The stakeholders were impressed. The business case cleared legal review. Leadership signed off on the next phase.

Then... nothing. Six months later, the pilot is quietly archived. The vendor relationship cools. A new initiative kicks off somewhere else in the organization, and the cycle starts again.

This isn't an edge case. It's the default outcome for enterprise AI.

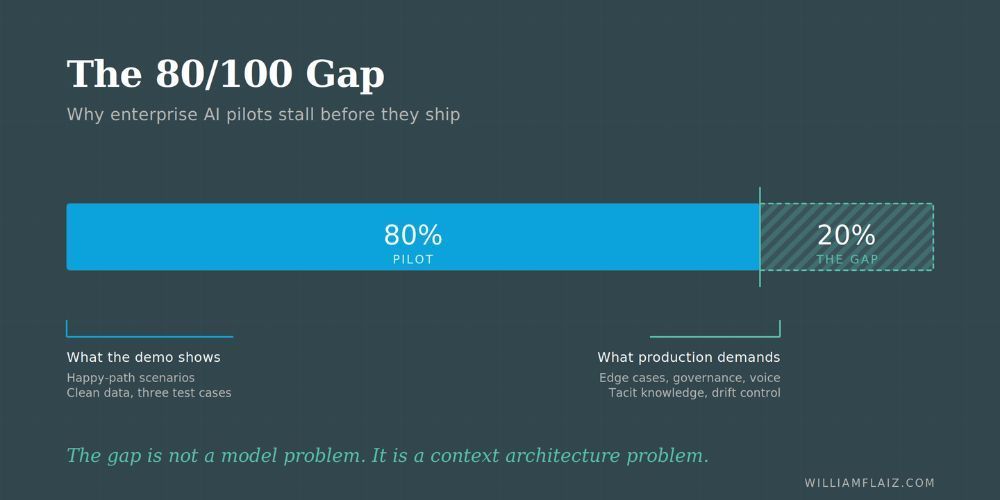

Depending on which study you read, somewhere between 60 and 80 percent of enterprise AI initiatives fail to reach production scale. The number gets tossed around so frequently it's started to feel abstract. But there's a specific reason these projects die, and it's almost never the one organizations blame.

It's not the technology. It's not the model. It's not even the budget.

It's structure.

Why the Blame Always Lands in the Wrong Place

After the pilot stalls, organizations tend to do a quick postmortem and land on familiar conclusions: the data wasn't ready, the vendor oversold, the timing was off. Sometimes those things are true. But they're symptoms, not causes.

The actual failure happens earlier. Often before the pilot even starts.

Most organizations approach enterprise AI the way they approached cloud migration a decade ago: acquire the capability, run a proof of concept, hand it off to operations, and declare victory. That sequence works fine for infrastructure. It breaks down completely when the output of the system isn't a server or a database but a recommendation, a prediction, or a decision.

AI doesn't hand off. It has to be wired in.

Three structural failure modes show up repeatedly in organizations struggling to move AI from pilot to production. They're not glamorous, and they're not the kind of thing that shows up in vendor case studies. But they're responsible for the vast majority of stalled initiatives.

Failure Mode 1: Data Quality as an Afterthought

No one admits they have a data quality problem until an AI system exposes it.

The pilot runs on a curated dataset. The data team pulls clean records, reconciles the duplicates, flags the anomalies. The model performs beautifully because it's working on a version of the organization's data that doesn't exist in production.

Then the initiative moves toward scale. The model encounters the real data infrastructure: years of inconsistent CRM entries, fields populated differently across business units, customer records that exist in three systems and match in none. The model doesn't fail dramatically. It just becomes quietly wrong. Confidently, consistently wrong.

The organizations that scale AI successfully treat data integrity as infrastructure, not cleanup. They've built systematic processes for maintaining data quality before they need AI, not after. That distinction sounds minor. The downstream impact is not.
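
One way to make "infrastructure, not cleanup" concrete is to run the same checks on the pilot dataset and on the production data, then compare the results before scaling. The sketch below is a minimal, hypothetical example in plain Python. The field names, the key normalization rule, and the two-point tolerance are illustrative assumptions, not a standard. A real audit would also cover far more than missing fields and duplicate keys.

```python
from dataclasses import dataclass


@dataclass
class QualityReport:
    rows: int
    missing_rate: float    # share of required field values that are empty
    duplicate_rate: float  # share of rows whose normalized key was already seen


def normalize_key(value: str) -> str:
    # Illustrative rule (an assumption): collapse whitespace and ignore case,
    # so "Acme Corp" and "acme  corp " count as the same customer.
    return " ".join(value.split()).casefold()


def profile(records: list[dict], key: str, required: list[str]) -> QualityReport:
    """Compute simple quality metrics for a list of record dicts."""
    rows = len(records)
    if rows == 0:
        return QualityReport(0, 0.0, 0.0)
    missing = sum(
        1
        for record in records
        for name in required
        if not str(record.get(name) or "").strip()
    )
    seen: set[str] = set()
    duplicates = 0
    for record in records:
        k = normalize_key(str(record.get(key) or ""))
        if not k:
            continue  # an empty key can't be matched, so it isn't counted as a duplicate
        if k in seen:
            duplicates += 1
        seen.add(k)
    cells = rows * len(required)
    return QualityReport(rows, missing / cells if cells else 0.0, duplicates / rows)


def production_gap(pilot: QualityReport, prod: QualityReport,
                   tolerance: float = 0.02) -> list[str]:
    """List the metrics where production data is meaningfully worse than the pilot."""
    gaps = []
    if prod.missing_rate > pilot.missing_rate + tolerance:
        gaps.append(f"missing fields: {prod.missing_rate:.1%} in production "
                    f"vs {pilot.missing_rate:.1%} in pilot")
    if prod.duplicate_rate > pilot.duplicate_rate + tolerance:
        gaps.append(f"duplicate keys: {prod.duplicate_rate:.1%} in production "
                    f"vs {pilot.duplicate_rate:.1%} in pilot")
    return gaps
```

Run against a curated pilot extract and a raw production extract, a non-empty result from `production_gap` is an early warning of the "quietly wrong" problem, surfaced before the model ever touches production data.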

Failure Mode 2: The Governance Vacuum

Here's a scenario that plays out more often than it should.

The AI system produces an output. A recommendation, a risk flag, a predicted customer action. And then... the output sits in a dashboard. Nobody owns it. Nobody acts on it. Nobody has been designated to decide what happens when the model is right, or what happens when it's wrong.

Governance in AI implementation isn't about ethics committees or compliance frameworks, though those matter. It's about something more immediate: who is accountable for AI-assisted decisions inside the organization, and how does accountability change when something goes wrong?

Most enterprises are extraordinarily good at assigning accountability for human decisions. They've built decades of process around it. They're much less practiced at handling decisions where a model contributed to the outcome.

Without clear ownership of AI outputs, those outputs get treated as interesting observations rather than operational inputs. The system runs. Nobody changes their behavior. The initiative loses its business case because it never actually changed anything.
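
Ownership can be made explicit rather than implied. The sketch below assumes a hypothetical registry that maps each type of model output to an accountable owner, an escalation contact, and a time limit for acting. The output types, role names, and time windows are illustrative placeholders. The point is that an output with no registry entry is flagged as ungoverned instead of sitting silently in a dashboard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Ownership:
    owner: str             # role accountable for acting on this output type
    escalation: str        # role that decides when the owner hasn't acted
    act_within: timedelta  # how long an output may wait before escalating


@dataclass
class ModelOutput:
    kind: str              # output type, e.g. "churn_risk" (hypothetical)
    subject: str           # the account, transaction, etc. the output refers to
    created: datetime
    acted_on: bool = False


def triage(outputs: list[ModelOutput], registry: dict[str, Ownership],
           now: datetime):
    """Split outputs into ungoverned (no owner defined), overdue (to escalate),
    and pending (waiting on the owner). Outputs already acted on under a
    defined owner are skipped."""
    ungoverned: list[ModelOutput] = []
    overdue: list[tuple[ModelOutput, str]] = []
    pending: list[tuple[ModelOutput, str]] = []
    for out in outputs:
        rule = registry.get(out.kind)
        if rule is None:
            ungoverned.append(out)  # nobody is accountable for this output type
        elif out.acted_on:
            continue                # already handled by its owner
        elif now - out.created > rule.act_within:
            overdue.append((out, rule.escalation))
        else:
            pending.append((out, rule.owner))
    return ungoverned, overdue, pending
```

Keeping ungoverned outputs as their own category makes the governance vacuum visible and countable during the pilot, rather than something discovered after the initiative has already stalled.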

Failure Mode 3: Missing the Decision Layer

This is the most common and the least discussed failure mode.

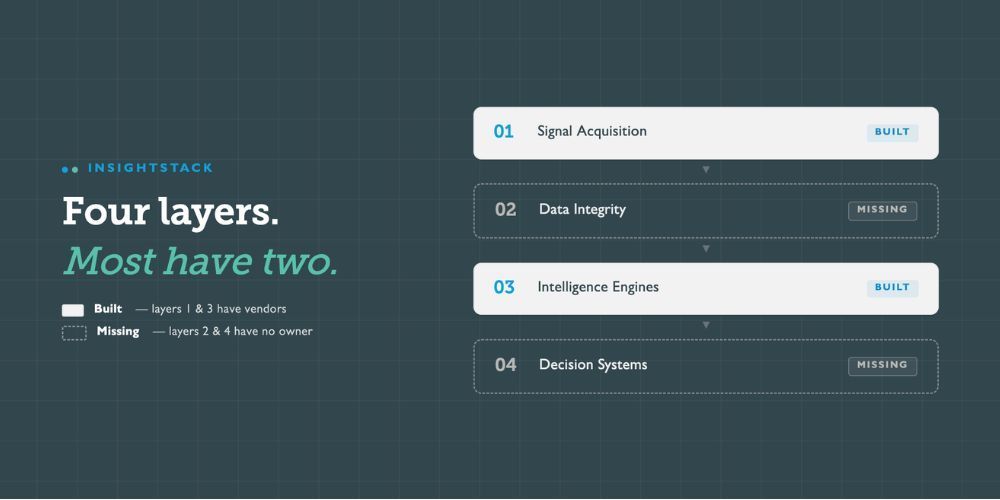

Organizations invest heavily in the first two layers of an AI architecture: signal acquisition (getting data in) and intelligence engines (running models against it). What they consistently underinvest in is the decision layer, the part of the system that translates model output into operational action.

A model that predicts customer churn with 87% accuracy is impressive. A model that predicts churn, routes the alert to the right account manager, surfaces the relevant context alongside it, and then tracks whether an intervention happened and whether it worked: that's a production system.

The difference between those two things isn't model sophistication. It's architecture. Specifically, whether the organization has built the infrastructure to connect intelligence to action.

Most pilots demonstrate the intelligence. They skip the action layer entirely. When the initiative scales, there's no infrastructure to receive the output and do anything useful with it.
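
To make the gap between intelligence and action concrete, here is a minimal sketch of the churn example above. It assumes a churn score already exists (the intelligence layer) and adds only the decision layer: routing above a threshold, attaching context, and recording whether someone intervened and what happened. The account-to-manager lookup, the 0.7 threshold, and all names are hypothetical. In practice the lookup would come from a CRM and the outcomes from renewal or billing data.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Alert:
    account: str
    churn_score: float
    assignee: str
    context: dict
    intervened: bool = False
    retained: Optional[bool] = None  # unknown until the outcome is observed


@dataclass
class DecisionLayer:
    account_managers: dict[str, str]  # account -> manager (stand-in for a CRM lookup)
    fallback_owner: str               # named owner for accounts with no manager
    threshold: float = 0.7            # hypothetical score that triggers action
    alerts: list[Alert] = field(default_factory=list)

    def route(self, account: str, score: float, context: dict) -> Optional[Alert]:
        """Turn a churn score into an assigned alert, or nothing below threshold."""
        if score < self.threshold:
            return None
        assignee = self.account_managers.get(account, self.fallback_owner)
        alert = Alert(account, score, assignee, context)
        self.alerts.append(alert)
        return alert

    def record(self, account: str, intervened: bool, retained: bool) -> None:
        """Close the loop: did anyone act, and did the customer stay?"""
        for alert in self.alerts:
            if alert.account == account:
                alert.intervened = intervened
                alert.retained = retained

    def retention_by_intervention(self) -> dict[bool, Optional[float]]:
        """Retention rate among closed alerts, split by whether someone intervened.

        This is a naive comparison, not a causal estimate.
        """
        rates: dict[bool, Optional[float]] = {}
        for acted in (True, False):
            closed = [a for a in self.alerts
                      if a.intervened == acted and a.retained is not None]
            rates[acted] = sum(a.retained for a in closed) / len(closed) if closed else None
        return rates
```

The retention comparison is deliberately simple. Managers may choose which accounts to contact, so a gap between the two rates is a signal worth investigating, not proof that the intervention worked.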

What Separates the Organizations That Actually Ship

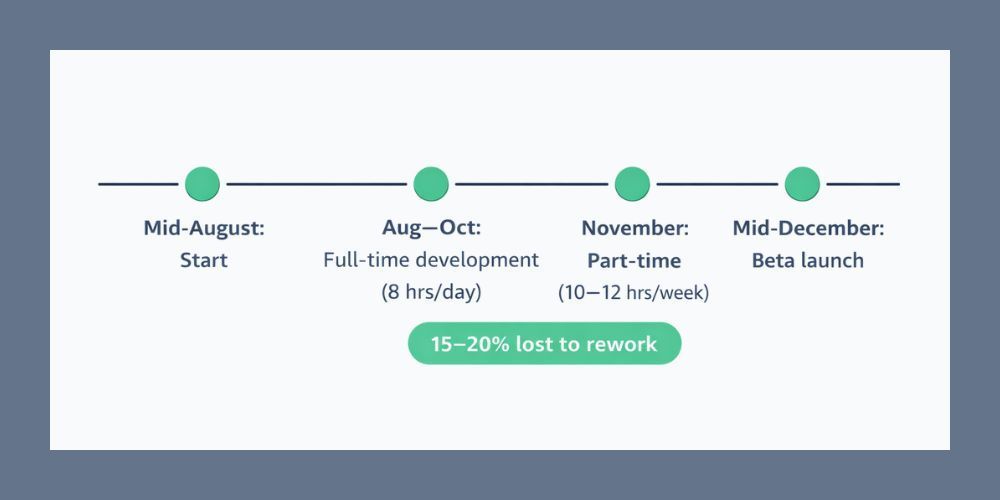

The organizations consistently moving AI from pilot to production share a few patterns worth noting.

They start with the decision, not the model. Before selecting a vendor or designing a proof of concept, they map exactly what decision the AI system will inform, who makes that decision today, how it will be made differently with AI in the loop, and what changes in the surrounding process. The model gets selected after that conversation, not before.

They treat data quality as a precondition, not a parallel workstream. By the time an AI initiative enters pilot, they've already audited the data it will depend on in production. Not a sample. The actual production data environment.

They build governance into the pilot itself. Accountability structures, escalation paths, and performance review processes get designed during the pilot phase so they're operational by the time the initiative scales.

None of this is technically complex. All of it requires organizational discipline that's harder to sustain than it sounds.

The Honest Question Worth Asking

Before the next AI initiative kicks off, it's worth asking one question that rarely appears in vendor presentations or internal pitch decks.

If this pilot succeeds, what changes in how this organization makes decisions?

If the answer is vague, the initiative will stall. Not because the technology failed, but because nobody designed the organizational infrastructure to use it.

The technology is ready. The models are capable. The thing keeping enterprise AI stuck in pilot purgatory is almost never the AI.

It's the judgment required to wire it into how the organization actually works.

Key Takeaways

- Data quality problems surface in production, not in pilots. Treat data integrity as infrastructure before the initiative begins.

- AI governance isn't a compliance exercise. It's about assigning clear ownership for AI-assisted decisions and their outcomes.

- The decision layer is where most AI architectures are incomplete. Intelligence without operational integration produces dashboards, not results.

Why do AI pilots fail even when the technology works?

The technology rarely causes the failure. Most pilots stall because of structural gaps: unresolved data quality in production environments, no governance framework for AI-assisted decisions, and missing architecture connecting model output to operational action.

What is the most common reason enterprise AI doesn't scale?

The absence of a decision layer. Organizations invest in data acquisition and model development but skip the infrastructure that translates AI output into specific, accountable organizational action. Without that layer, model outputs accumulate in dashboards nobody acts on.

How do we know if our organization is ready to move an AI pilot to production?

Three questions to pressure-test readiness: Does production data meet the same quality standards as your pilot dataset? Is there a named owner for AI-assisted decisions and their outcomes? And does the surrounding process change in a documented, measurable way when the AI system is active?

Author: William Flaiz