Wrappers vs. Reasoning Engines: What I Learned Building the Meridian ICP

Most AI products right now are extraction tools wearing a product costume. Here's the structural difference, and what it takes to cross the line.

There's a distinction worth drawing early in any AI product conversation: the difference between a tool that extracts and a tool that reasons.

An extraction tool takes your input, runs it through a model, and gives you back a cleaned-up version of what you said. A reasoning tool takes your input, runs it through a model, and gives you back something you didn't say but that follows from what you said. The first one is documentation. The second one is analysis.

Most products labeled as AI right now sit on the extraction side. A UI, a prompt, a response, a download button. The model summarizes, classifies, rewrites, formats. What you get out is a function of what you put in.

That's a legitimate category. It's also a crowded one, and it's the category most investors mean when they say the AI wrapper market is going to collapse. The moat is thin because the work is thin. Any team with API access can ship a similar product in a week.

Reasoning engines are structurally different. They're harder to build, harder to explain, and much harder to replicate. Meridian ICP (meridianicp.com), which I shipped this year, is one of them. This piece walks through what makes that distinction real, using that build as the case study.

What reasoning requires that extraction doesn't

Extraction is a one-shot operation. User input goes in, model output comes out, done. The prompt does not need to track anything over time. It does not need to compare one piece of input against another. It does not need to notice when two things a user said contradict each other. It just needs to produce a good-looking response to whatever is in the context window.

Reasoning requires at least four things extraction doesn't:

- State. The model has to know what it has already collected, what it still needs, and what conclusions it has drawn. That state has to persist across turns and has to survive model outputs that might try to re-ask or skip questions.

- Minimum evidence thresholds. A reasoning tool cannot treat a single example as a pattern. If a user says "our deals usually come from referrals," that's a hypothesis. Three specific referral sources with named companies and timelines is evidence. The system has to enforce the difference.

- Contradiction surfacing. When the user says one thing and then says something else that contradicts it, the system has to notice and flag the conflict rather than quietly resolving it in favor of whatever was said most recently. This is the hardest part and the most valuable.

- Confidence calibration. Not every conclusion the system produces deserves equal weight. Some are backed by many examples. Some are inferred from one answer. The system has to know the difference and has to communicate it to the user.

None of these are solved by picking a bigger model. They are solved by architecture.

The two-layer prompt chain

The first architectural decision in Meridian ICP was splitting the work into two distinct model calls with two distinct jobs.

Layer 1 is the Intake Conductor. Its only job is to run a structured conversation. One question at a time. Adaptive follow-ups based on the previous answer. State tracking across six ICP dimensions (customer profile, buying triggers, evaluation criteria, objection and loss patterns, channel and discovery, language and messaging). Minimum example thresholds enforced inside each dimension before moving on.

The Intake Conductor is explicitly told what it is not allowed to do. It is not allowed to evaluate answers. It is not allowed to say "that's helpful" or "great." It is not allowed to combine two questions into one. It is not allowed to announce transitions between dimensions. It is not allowed to synthesize or draw conclusions during the interview. When all six dimensions are covered, it emits a specific string: [INTAKE_COMPLETE]. That string is the handoff signal.

Layer 2 is the Report Synthesizer. It runs once. It takes the full transcript from Layer 1 and produces a structured JSON report. It is not allowed to ask questions. It is not allowed to invent data. It is instructed to extract patterns across multiple examples (never report a single data point as a pattern), to surface the gap between what prospects say and what actually drives decisions, to classify buying triggers by urgency, and to flag dimensions where the data is thin.

Splitting the work matters because the two jobs require opposite cognitive stances. Intake requires a model that holds back, asks, listens, tracks. Synthesis requires a model that commits, infers, contradicts, concludes. A single prompt trying to do both produces a model that does neither well. You get either an over-eager interviewer that starts analyzing mid-conversation, or a cautious analyst that keeps asking more questions instead of drawing conclusions.
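As a rough illustration of the split, here is a minimal sketch of the control flow, not the production code. It assumes each layer is a plain callable that takes a system prompt and a message list and returns text. The names run_chain, Model, answer_fn, and the two prompt placeholders are hypothetical. Only the [INTAKE_COMPLETE] string comes from the build described above.

```python
from typing import Callable, Dict, List

HANDOFF = "[INTAKE_COMPLETE]"  # the handoff string described in the article

Message = Dict[str, str]
# Hypothetical model interface: (system_prompt, messages) -> reply text.
Model = Callable[[str, List[Message]], str]


def run_chain(intake_model: Model, synth_model: Model,
              answer_fn: Callable[[str], str], max_turns: int = 60) -> str:
    """Run Layer 1 until it emits the handoff string, then call Layer 2 once."""
    transcript: List[Message] = []
    for _ in range(max_turns):
        reply = intake_model("INTAKE_CONDUCTOR_PROMPT", transcript)
        if HANDOFF in reply:
            break  # boundary between interviewing and synthesizing
        transcript.append({"role": "assistant", "content": reply})
        transcript.append({"role": "user", "content": answer_fn(reply)})
    else:
        # Loop ended without a break: the intake layer never handed off.
        raise RuntimeError("intake never emitted the handoff string")
    # Layer 2 receives the full transcript and is called exactly once.
    return synth_model("REPORT_SYNTHESIZER_PROMPT", transcript)


# Stub models so the sketch runs without any API (assumptions, not real prompts).
def fake_intake(system: str, messages: List[Message]) -> str:
    asked = sum(1 for m in messages if m["role"] == "assistant")
    return f"Question {asked + 1}?" if asked < 2 else HANDOFF


def fake_synth(system: str, messages: List[Message]) -> str:
    return f"report from {len(messages)} messages"


print(run_chain(fake_intake, fake_synth, lambda question: "an answer"))
```

The point of the shape is that the synthesizer never sees a partial transcript and the interviewer never gets a chance to conclude anything.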

The handoff string ([INTAKE_COMPLETE]) is load-bearing. It's the boundary between the two cognitive modes. It's also a useful debugging tool: if the Intake Conductor emits it early, you know the state tracking is broken; if it refuses to emit it, you know the minimum thresholds are too strict.

State tracking in practice

The Intake Conductor's system prompt carries an explicit state-tracking instruction block. It knows:

- Which of the six dimensions it is currently in

- What has already been covered

- What still needs to be collected before moving on

- When an answer naturally covers a follow-up topic (skip it)

Three of the six dimensions enforce minimum example thresholds. Buying triggers requires three specific examples before advancing. Objection and loss patterns requires three early objections, three late-stage stalls, and three loss stories. Channel and discovery requires three closed deals with named sources. The intake will not advance past these dimensions until the thresholds are met.
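In Meridian ICP these rules live in the system prompt, not in application code. Purely to make the rule set concrete, here is a small sketch that expresses the same minimums as data, for example as an external check on a transcript. The dictionary keys and the function names missing_evidence and can_advance are hypothetical. The counts are the ones stated above.

```python
from typing import Dict

# Minimums as stated in the article; the key names are illustrative assumptions.
THRESHOLDS: Dict[str, Dict[str, int]] = {
    "buying_triggers": {"specific_examples": 3},
    "objection_and_loss": {
        "early_objections": 3,
        "late_stage_stalls": 3,
        "loss_stories": 3,
    },
    "channel_and_discovery": {"closed_deals_with_named_source": 3},
}


def missing_evidence(dimension: str, counts: Dict[str, int]) -> Dict[str, int]:
    """Return how many more examples each category needs; empty means none."""
    required = THRESHOLDS.get(dimension, {})  # other dimensions have no minimum
    return {
        kind: need - counts.get(kind, 0)
        for kind, need in required.items()
        if counts.get(kind, 0) < need
    }


def can_advance(dimension: str, counts: Dict[str, int]) -> bool:
    """True when every minimum for the dimension has been met."""
    return not missing_evidence(dimension, counts)


collected = {"early_objections": 3, "late_stage_stalls": 1}
print(missing_evidence("objection_and_loss", collected))
print(can_advance("objection_and_loss", collected))
```

A check like this only counts examples. Deciding whether an answer was specific enough to count is still the model's job in the design described here.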

This is not a retrieval problem or a vector database problem. It's prompt engineering plus conversation design. The model tracks state the way a good interviewer does: by keeping count and by noticing when an answer was too vague to count.

What this buys you, structurally, is a conversation that produces analyzable data instead of narrative. The Report Synthesizer gets a transcript with three named buying triggers, three specific loss stories, three actual deal sources with timelines, rather than a free-form essay about how the user's customers generally behave.

You cannot reason across vague data. State tracking and threshold enforcement are what turn conversation into evidence.

Contradiction surfacing, or: why this isn't just a fancy form

This is the part that separates Meridian ICP from every templated ICP tool on the market, and it's the part that took me the longest to get right.

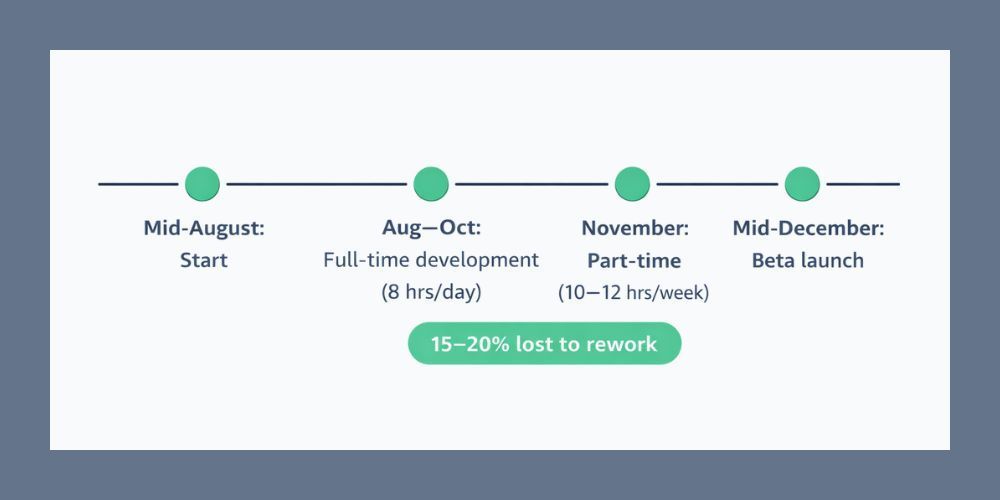

In the February 2026 beta test, the product shipped as a readout, not an analysis. It organized what the user said. It extracted. It did not reason. A consultant producing that same output would have been politely asked to leave.

The failure mode was specific. A user would describe their stated evaluation criteria in Dimension 3 ("prospects say they care most about integration depth and implementation timeline"). Later, in Dimension 3's follow-up on actual win patterns, the same user would describe closed deals that were really won on relationship with the account executive and pricing flexibility. Two different answers. Both from the same user. Both in the same interview.

The first version of the Synthesizer treated both answers as equally valid inputs and produced a report that listed both as criteria, side by side, with no notice that they contradicted each other. The user would read the report and think, "That looks right," because both statements were, individually, things they had said.

That's the extraction failure mode. The system faithfully reproduced the input without noticing that the input contradicted itself.

The rebuild instructed the Synthesizer to do one specific thing differently: when you detect a contradiction in the transcript, flag it explicitly as an insight. Name it. Give it a specific label. I gave it the name ICP Drift: the gap between what a B2B team says drives their ideal customer purchases and what the closed-won data actually shows.

Once ICP Drift had a name, the Synthesizer could surface it as a section in the report rather than treating it as noise. That single prompt change did more for the product than any other improvement. It's the difference between a tool that produces documentation and a tool that produces analysis.
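In the actual build the Synthesizer prompt does the detecting. To show what "name it and surface it as a section" can look like structurally, here is a toy sketch that assumes the stated criteria and the observed win factors have already been extracted as short strings. DriftFinding and find_icp_drift are hypothetical names, and exact string matching is a deliberate simplification of what a model would judge semantically.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DriftFinding:
    label: str
    stated_only: List[str]    # named as criteria, absent from closed-won deals
    observed_only: List[str]  # drove closed-won deals, never named as criteria


def find_icp_drift(stated: List[str], observed: List[str]) -> Optional[DriftFinding]:
    """Return a labeled finding when stated and observed factors diverge."""
    stated_set = {s.strip().lower() for s in stated}
    observed_set = {o.strip().lower() for o in observed}
    stated_only = sorted(stated_set - observed_set)
    observed_only = sorted(observed_set - stated_set)
    if not stated_only and not observed_only:
        return None  # no divergence, so nothing to surface
    return DriftFinding("ICP Drift", stated_only, observed_only)


# The example from the beta test described above.
finding = find_icp_drift(
    stated=["integration depth", "implementation timeline"],
    observed=["relationship with the account executive", "pricing flexibility"],
)
print(finding)
```

The useful property is that the contradiction becomes its own object with its own label, instead of two list entries sitting side by side.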

Confidence scoring as an output primitive

The second lesson from the beta test was about uniform confidence. The original Synthesizer treated every finding in the report with the same authority. A buying trigger backed by three detailed examples looked identical on the page to a buying trigger inferred from a single sentence. That's dangerous because it hides the weakest parts of the report inside the strongest ones.

The rebuild labeled each finding with a confidence classification:

- High Confidence: backed by three or more specific examples

- Medium Confidence: backed by two examples

- Thin Data: backed by one example or inferred

The classification is calculated by the Synthesizer based on what it finds in the transcript and is rendered visibly in the report. A section labeled Thin Data is a section the user should come back and add context to before acting on it. A section labeled High Confidence is something they can act on Monday.
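The three tiers reduce to a simple mapping. In the product the Synthesizer assigns the label itself, so treat this as a sketch of the rule rather than the implementation. The function name confidence_label and the inferred flag are assumptions.

```python
def confidence_label(example_count: int, inferred: bool = False) -> str:
    """Map supporting evidence to the article's three confidence tiers."""
    if inferred or example_count <= 1:
        return "Thin Data"          # one example, or inferred
    if example_count == 2:
        return "Medium Confidence"  # two examples
    return "High Confidence"        # three or more specific examples


for count in (1, 2, 3, 5):
    print(count, confidence_label(count))
print("inferred:", confidence_label(4, inferred=True))
```

Here the inferred flag overrides the count, on the assumption that a conclusion the model inferred should read as Thin Data however many passages it drew on.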

Confidence scoring changes the relationship between the tool and the user. Without it, the user either trusts everything or distrusts everything. With it, the user knows where to invest more attention. That's how a senior strategist behaves in a readout meeting. That's what the product needed to emulate.

This is also what makes the product a living document rather than a one-time deliverable. A report with confidence scoring invites the user back to strengthen the weak sections. A report without it doesn't.

The shape of a reasoning engine

Pulling the pieces together, here's the structural shape of what separates a reasoning engine from a wrapper:

A wrapper is: one prompt, one call, one response. Input goes in, output comes out. The model does the work the user asked for, directly.

A reasoning engine is: at least two model calls with different cognitive roles, state tracked across turns, minimum evidence thresholds enforced before advancing, contradictions between inputs surfaced explicitly as insights, confidence calibrated and communicated in the output.

The code difference is not large. A wrapper might be 200 lines. A reasoning engine with the features above might be 800. The architectural difference is what makes the second one defensible. The first one is reproducible. The second one requires a designer who understands both the model's tendencies and the domain's reasoning patterns well enough to build the right scaffolding around the model.

In Meridian ICP, that scaffolding came from two decades of doing the same ICP work with consulting clients and noticing where the conversation broke down. The architecture is not an academic exercise. It's an encoding of how the best version of that conversation actually works.

Why this matters for anyone shipping AI products right now

The current AI product market has a saturation problem at the wrapper layer. Every week there are five new tools that summarize meetings, rewrite emails, or generate personas. They compete on price, UI, and marketing. They do not compete on reasoning because they don't do any.

The products that will still be alive in three years are the ones that solved a problem with structure, not just with access to an API. State tracking. Multi-step prompt chains with distinct cognitive roles. Evidence thresholds. Contradiction surfacing. Confidence calibration. These are not features. They are the anatomy of a product that does actual work.

If you are building in this space, the question worth sitting with is whether your product can survive a user saying two things that conflict. A wrapper can't. A reasoning engine has to. Designing for that moment, explicitly, is where most of the durable value in AI products lives right now.

Connect

If you are building AI products and want to talk architecture, I'm easy to find. Two-layer prompt chains, state tracking, and the question of what makes a product reason rather than extract are things I think about most days. Happy to compare notes.

You can see Meridian ICP at meridianicp.com.

What's the difference between an AI wrapper and a reasoning engine?

A wrapper is one prompt, one API call, one response. Input goes in, output comes out. A reasoning engine uses multiple model calls with distinct cognitive roles, tracks state across turns, enforces minimum evidence thresholds, surfaces contradictions between inputs, and calibrates confidence in its outputs. The code difference isn't huge. The architectural difference is what makes the reasoning engine defensible.

Why split an AI product into two separate prompts instead of one?

Different cognitive tasks require opposite stances. Interviewing requires a model that holds back, asks, listens, and tracks. Synthesizing requires a model that commits, infers, contradicts, and concludes. A single prompt trying to do both produces a model that does neither well. Splitting them lets each prompt optimize for its actual job.

What is ICP Drift?

ICP Drift is the gap between what a B2B team says drives their ideal customer purchases and what their closed-won data actually shows. Stated evaluation criteria and actual win patterns almost always diverge. Meridian ICP surfaces this gap explicitly as a report section rather than quietly resolving it in favor of whichever answer the user gave most recently.

Author: William Flaiz